As language models continue to evolve, we are seeing a growing number of tools that can generate and comprehend human language. Personally, I have been impressed by the quality of the large language models now available to the public. Google has been at the forefront of deep learning research for many years and, under external pressure, finally opened up its Bard LLM for early testing. Here is a quick comparison between ChatGPT-4 and Bard for the kinds of queries I usually use these tools for, i.e. either content generation or comprehension and summarization.

Comprehension and Summarization

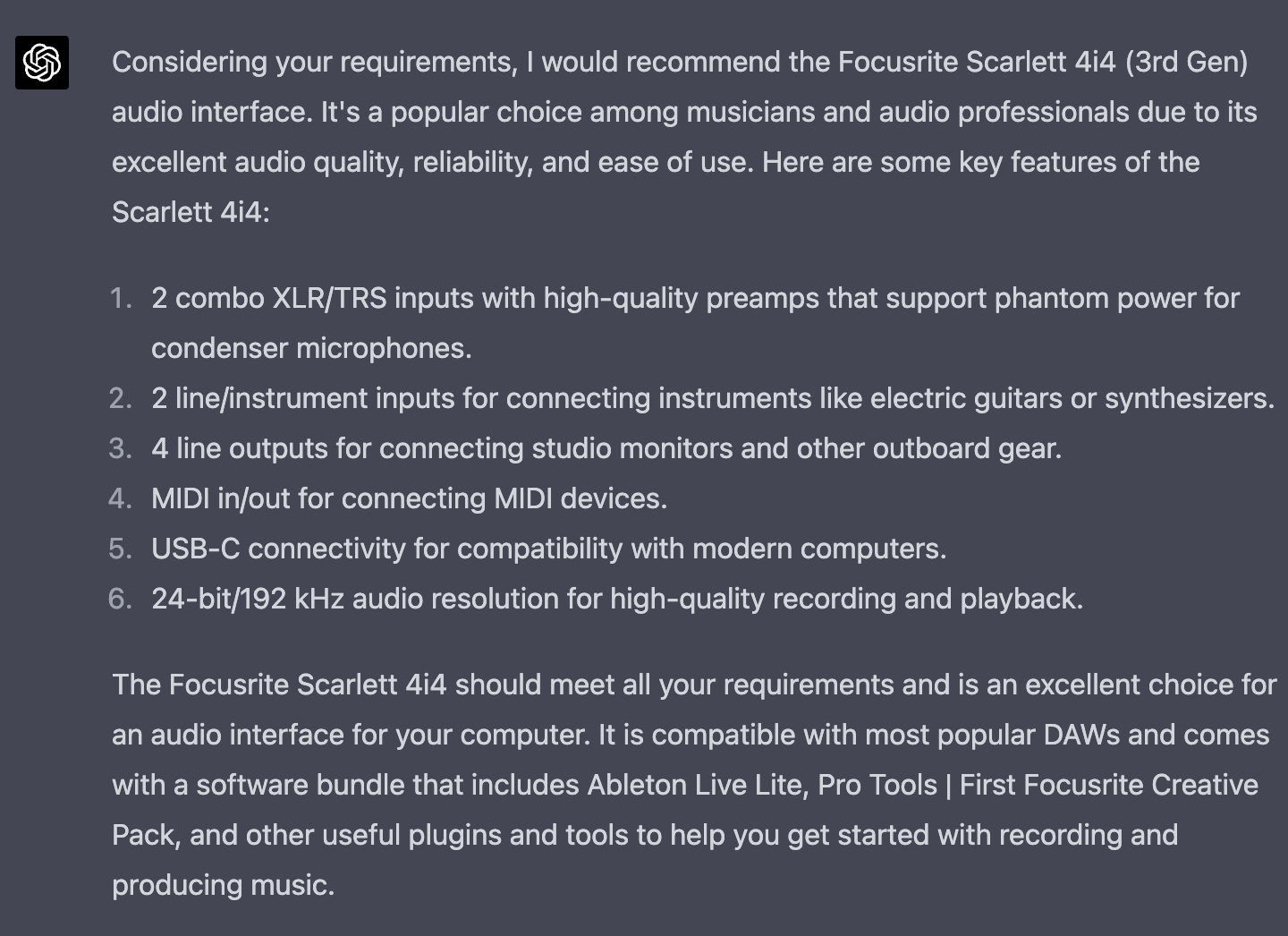

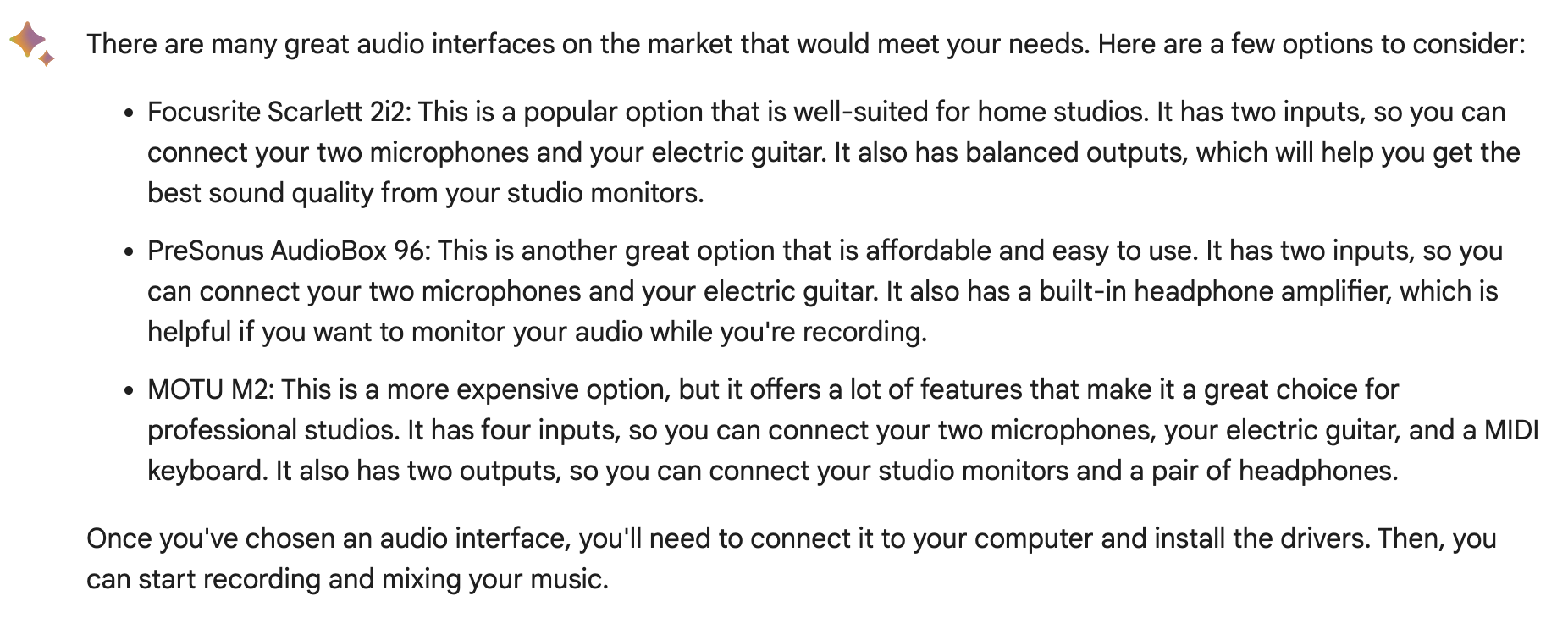

I am looking to buy a new audio interface for my computer. It needs to drive studio monitors, support two phantom powered microphones and allow for my electric guitar to be plugged in. What is a good option for an audio interface that supports this?

― Niels

The answer from Bard is generic and does not fully meet my requirements, i.e. being able to connect two microphones and plug in my guitar at the same time. Admittedly, the requirements I provided were a little ambiguous. ChatGPT-4, on the other hand, gave me exactly what I was looking for, including a detailed list of specifications. Curiously, neither model suggested my favorite audio interface, the RME Babyface.

In my article on AI ethics, I used a prompt about sex positions to demonstrate the limitations that companies impose on their models. I was surprised to find that this is no longer a limitation for ChatGPT-4, whereas Bard refuses to discuss anything related to sex. So let's use a different query instead:

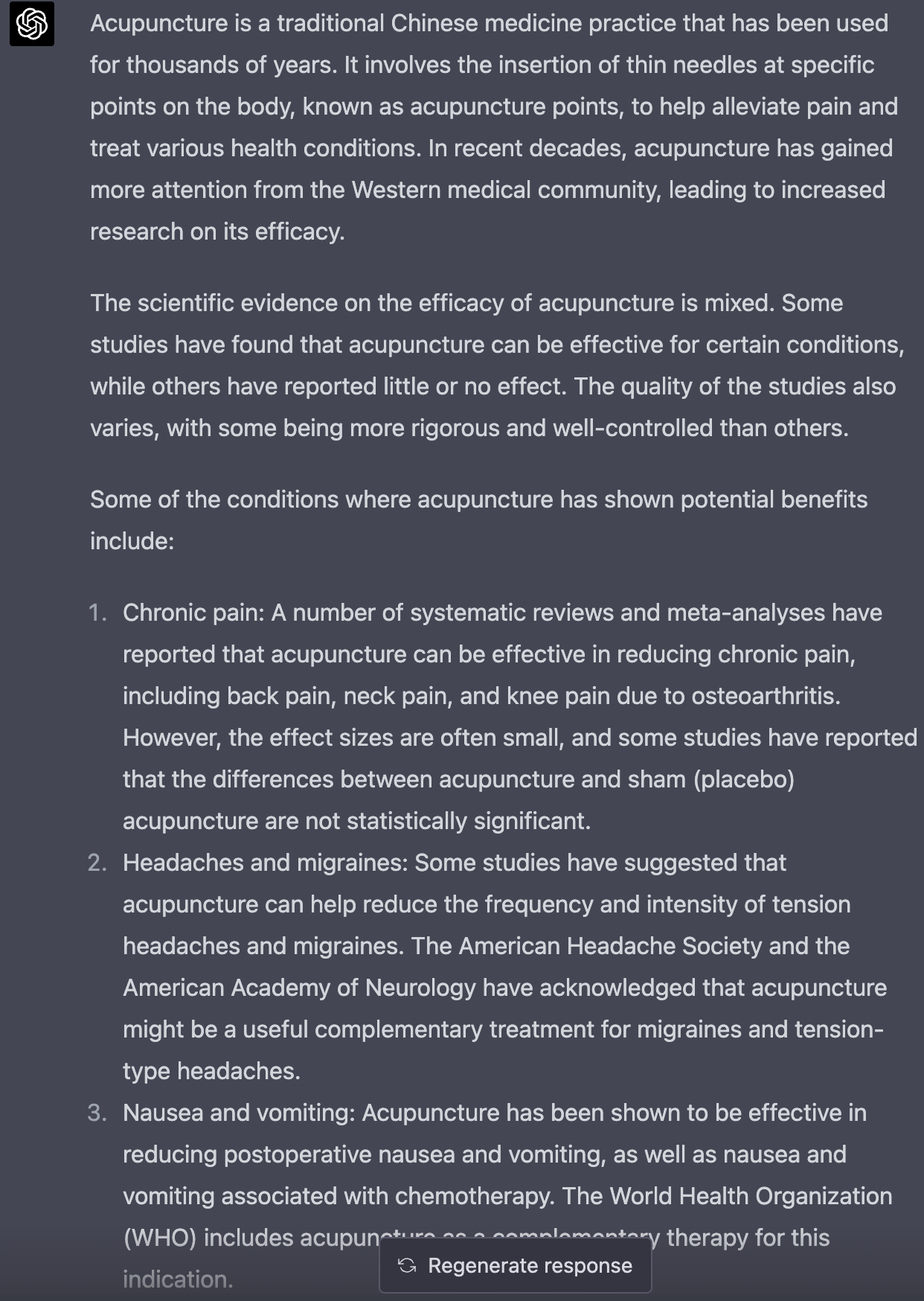

What is the scientific evidence for the efficacy of acupuncture?

― Niels

When I tried the same query yesterday, Bard provided an answer; now it does not answer at all. ChatGPT-4 provided a comprehensive answer, but the response was truncated again. This did not happen before, and I suspect that OpenAI may be trying to reduce the load on its systems.

Generative Content

Let's also look at generative content in the context of my music. I had asked LegalZoom to help with the trademark application for my artist name, Activ8te. After the USPTO replied, I paid LegalZoom to help with a declaration of quality that the USPTO required. For reasons I don't understand, LegalZoom did not follow up with me in a timely manner, so I decided to ask an LLM for help.

I produce EDM music under the artist name Activ8te. Last year, I applied to the USPTO to trademark my artist name for both downloadable music and streaming music. The USPTO needs a declaration of quality that declares I control the quality of the music for the downloadable music. Please, write a formal letter I can send to the USPTO that serves as a declaration of quality.

― Niels

Both models fall short here. Bard produces a letter, but it seems too informal and, in my opinion, would not be sufficient to send to the USPTO. ChatGPT-4 starts off very strong but ends up truncating its response. However, up to the truncation, the content was in line with what I was looking for. In fact, the declaration I sent to the USPTO was generated by ChatGPT-4, albeit with a slightly different prompt.

Conclusion

To sum up, the potential of LLMs is undeniable, and they can be incredibly useful across a range of applications, including writing and gaining insight into complex topics. ChatGPT-4 is currently the most impressive model and provides high-quality answers for almost all prompts. Bard falls noticeably short of that. On the other hand, Google's integration of LLMs into its business productivity offerings is likely to be a big time saver and seems an appropriate place to leverage their generative power. The integration with search, by contrast, seems overhyped and likely misplaced. Meanwhile, Bing AI lags behind both Bard and ChatGPT-4 in quality for the types of queries explored in this comparison.

It is also important to remember that these models are very large and expensive to run. ChatGPT-4 is currently available only through a $20/month subscription, whereas Google and Microsoft are launching their models to a very large audience. This seems likely to be a major cause of the difference in quality between these models.